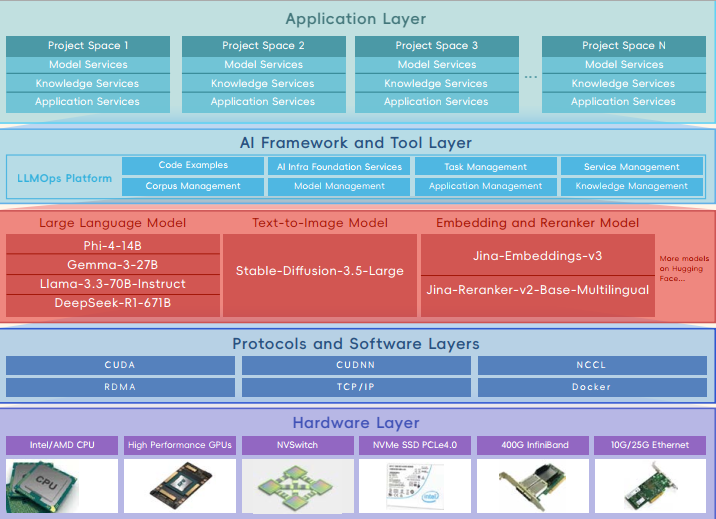

LLMOps, Upwards’s enterprise-level platform, manages the full lifecycle of large language models. It enables enterprises to bring these models into production and business processes in an agile, efficient, closed-loop manner. The platform optimizes the entire workflow: corpus access and development, prompt engineering, model training, knowledge extraction and fusion, model management, application and agent development, operations and maintenance, monitoring, and continuous improvement of business outcomes.

LLMOps supports the latest large language models such as Phi-4-14B, Gemma-3-27B, Llama-3.3-70B-Instruct, and DeepSeek-R1-671B; text-to-image models such as Stable-Diffusion-3.5-Large; embedding models such as Jina-Embeddings-v3; reranker models such as Jina-Reranker-v2-Base-Multilingual; and more from Hugging Face. It leverages GenAI’s strengths in natural language processing, efficient training and inference, and high-precision AI reasoning.

This platform supports the high computational demands of GenAI model training. It provides GPU-accelerated training for various LLM algorithms through optimized software and hardware engineering. We offer customizable packages tailored to customers’ needs and budgets.

Housed in a 1U standard chassis, each 400G IB switch delivers 64 NDR 400Gb/s InfiniBand ports. A single switch offers 51.2 Tb/s of aggregate bidirectional throughput and a packet forwarding rate exceeding 66.5 billion packets per second (BPPS). Supporting the latest NDR technology, it provides high-speed, low-latency, and scalable solutions.
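
As a quick sanity check on the figures above, the aggregate throughput follows from the port count and per-port speed. The sketch below is illustrative only: the function name is hypothetical, and it assumes decimal units (1 Tb/s = 1000 Gb/s) and that "bidirectional" means both directions of every port are counted.

```python
# Figures quoted in the paragraph above.
PORTS = 64
PORT_SPEED_GBPS = 400  # NDR InfiniBand, per port, per direction


def aggregate_throughput_tbps(ports, gbps_per_port, bidirectional=True):
    """Return total switch throughput in Tb/s (assumes 1 Tb/s = 1000 Gb/s)."""
    directions = 2 if bidirectional else 1
    return ports * gbps_per_port * directions / 1000


# 64 ports x 400 Gb/s x 2 directions
print(aggregate_throughput_tbps(PORTS, PORT_SPEED_GBPS))
```

Counting a single direction only, the same 64 ports give half that figure.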

It's not just about working smarter; it's about working better, together.

© 2026 Upwards. All rights reserved.